The Fog Beyond the Cloud

One perennial question in technology circles is where best to host our workloads: on-premises or in the cloud? The two camps seem increasingly divided. Reports of companies swinging back towards on-premises infrastructure to escape rising cloud costs appear regularly. At the same time, many organisations proudly declare themselves cloud-first, while others settle somewhere in the middle by claiming to have a cloud-native approach.

The real question should no longer be where a workload is hosted. It should be how we can securely parcel it up so that the location becomes almost irrelevant. Achieving this would liberate our compute resources to go wherever the future demands.

When most people think about data centres, storage, application hosting, AI training or inference, they picture secure facilities with airlock doors, dozens of aisles filled with racks of blinking servers, false floors, extensive cable trunking and no humans in sight. There will probably always be a role for these concentrated hubs of power. Yet if you pause and consider where compute actually lives today, the picture changes dramatically.

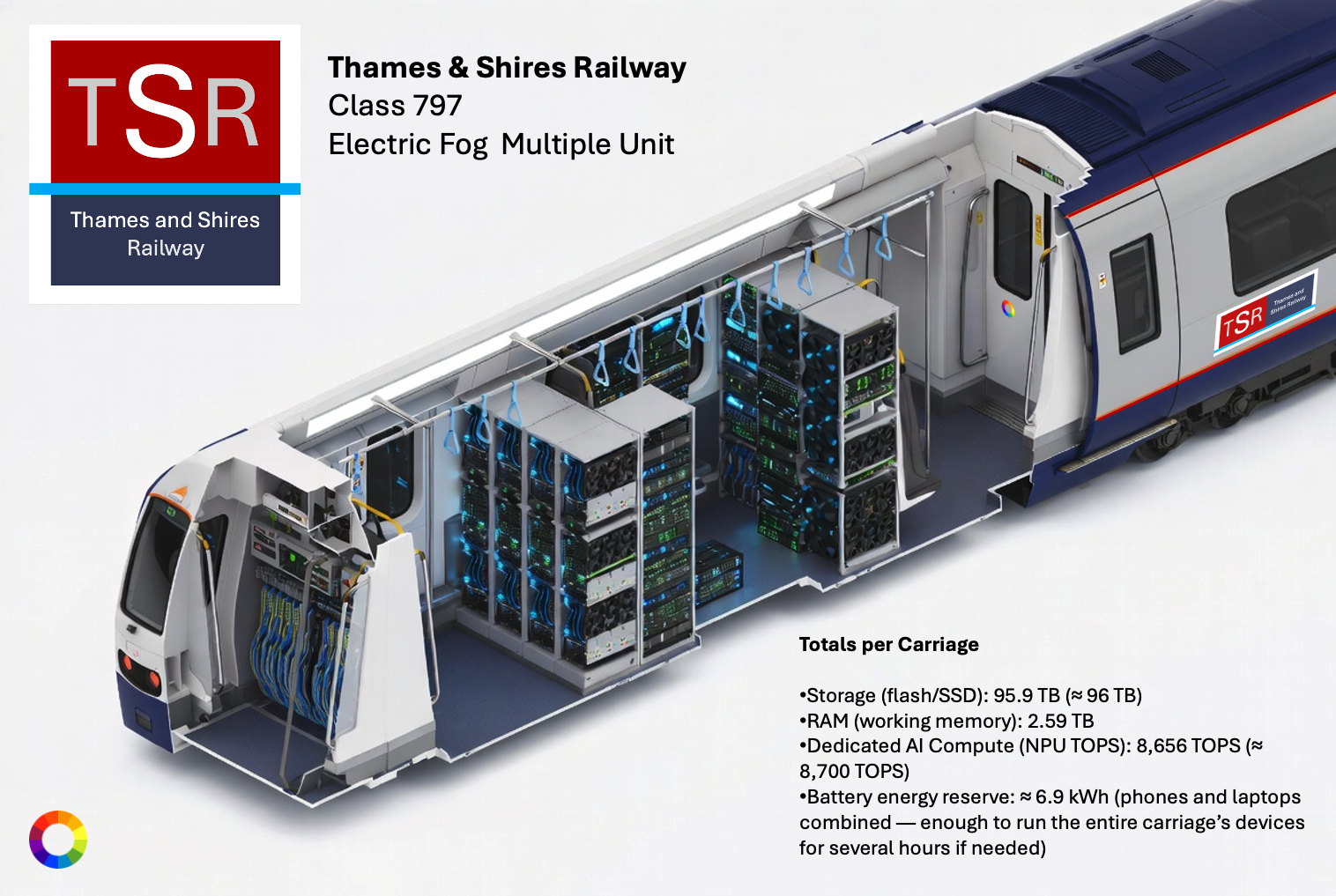

Consider a typical peak-hour commuter train heading into London. Many of these services run with 12 carriages, and during the morning rush each carriage can carry 110 to 150 passengers. Nearly every person has a smartphone, and more than half also carry a laptop.

For those who have not yet seen where this is heading, here it is in plain terms. The average commuter today carries more on-device compute in their bag or pocket than many entire supercomputers possessed in the year 2000. On a busy 12-carriage train with 1,500 to 1,800 passengers, that adds up to roughly 0.75 to 1 petabyte of storage and 80,000 to 100,000 or more TOPS (trillions of operations per second) of collective AI compute. This floating supercluster sits largely idle while passengers scroll through feeds or listen to podcasts.
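The headline figures above are easy to sanity-check with simple multiplication. The sketch below assumes, purely for illustration, that every passenger carries a phone with 256 GB of storage and a neural processor rated at about 35 TOPS, and that half of them also carry a laptop with 512 GB of storage and about 45 TOPS. These per-device numbers are round assumptions, not survey data.

```python
"""Back-of-the-envelope estimate of the idle compute on one commuter train.

All per-device figures below are illustrative assumptions, not measurements.
"""

from dataclasses import dataclass


@dataclass(frozen=True)
class DeviceProfile:
    storage_gb: float  # assumed on-device storage per device
    npu_tops: float    # assumed peak AI throughput per device (TOPS)


# Assumed typical devices (hypothetical round numbers for illustration).
PHONE = DeviceProfile(storage_gb=256, npu_tops=35)
LAPTOP = DeviceProfile(storage_gb=512, npu_tops=45)
LAPTOP_SHARE = 0.5  # assumed fraction of passengers who also carry a laptop


def train_totals(passengers: int) -> tuple[float, float]:
    """Return (storage in petabytes, AI compute in TOPS) for one train."""
    laptops = passengers * LAPTOP_SHARE
    storage_gb = passengers * PHONE.storage_gb + laptops * LAPTOP.storage_gb
    tops = passengers * PHONE.npu_tops + laptops * LAPTOP.npu_tops
    return storage_gb / 1_000_000, tops  # 1 PB = 1,000,000 GB (decimal units)


for passengers in (1_500, 1_800):
    petabytes, tops = train_totals(passengers)
    print(f"{passengers:,} passengers: ~{petabytes:.2f} PB, ~{tops:,.0f} TOPS")

# 1,500 passengers: ~0.77 PB, ~86,250 TOPS
# 1,800 passengers: ~0.92 PB, ~103,500 TOPS
```

Under those assumptions the totals come to roughly 0.77 to 0.92 PB and 86,000 to 104,000 TOPS, in line with the ranges quoted. Vendor TOPS ratings are peak figures, typically quoted at reduced precision, so sustained throughput on real workloads would be lower.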

Now multiply this scene across every busy line into London, then across all the other major cities in the UK and around the world. The scale of latent computing resources, in the form of storage, memory and processing power, becomes colossal. This is before we even consider the powerful desktop machines sitting idle in homes and offices, many of them, like recent Apple computers, with specialist AI chips built in. It is also before we account for the more than six million Teslas on driveways worldwide, each equipped with an advanced AI computer, its own air conditioning system and a large battery ready to supply power.

Then there are the emerging data centres in space. Satellite constellations like Starlink already operate as a giant network around 550 kilometres above us, adding yet another layer to this distributed computing fabric. The next generation of constellations is expected to carry AI chips on board, drawing on free and abundant solar energy and radiating waste heat directly into space. Ground data centres, by contrast, can spend a third or more of their total energy on cooling alone.

In other words, the future lies in the fog. Fog computing, or edge computing if you prefer the term, will be the mechanism by which this vast pool of latent resources is mopped up, orchestrated, parcelled out and put to productive use. Whether that means selling spare cycles, running distributed AI inference or supporting new classes of applications, the potential is enormous.

The key to unlocking this potential is the development of systems capable of securing individual workloads, orchestrating activity across wildly different devices, balancing loads intelligently, and ensuring availability, observability and detailed metrics. These systems must protect data rigorously while storing only what is necessary, and they must remain extremely lightweight.
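To make the orchestration requirement concrete, here is a minimal sketch of how a scheduler might place one workload across wildly different devices. Everything in it is hypothetical: the `Device` and `Workload` fields, the scoring weights and the `place` function are illustrative assumptions, not an existing orchestrator's API, and the fleet figures are invented. The approach is to filter out devices that cannot meet the workload's hard requirements, then rank the survivors, preferring devices that are plugged in, lightly loaded and well connected.

```python
"""Hypothetical capability-aware placement for a fog scheduler (illustrative only)."""

from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Device:
    name: str
    free_memory_mb: int
    npu_tops: float
    battery_pct: float    # 0 to 100
    on_mains_power: bool
    utilisation: float    # 0.0 (idle) to 1.0 (fully busy)
    link_quality: float   # 0.0 (offline) to 1.0 (excellent)


@dataclass(frozen=True)
class Workload:
    name: str
    min_memory_mb: int
    min_tops: float
    min_battery_pct: float = 30.0  # assumed floor so we never flatten someone's phone


def eligible(device: Device, workload: Workload) -> bool:
    """Hard constraints: can this device run the workload at all?"""
    has_power = device.on_mains_power or device.battery_pct >= workload.min_battery_pct
    return (
        device.free_memory_mb >= workload.min_memory_mb
        and device.npu_tops >= workload.min_tops
        and has_power
        and device.link_quality > 0.0
    )


def score(device: Device) -> float:
    """Soft preferences: favour idle, well-connected, mains-powered devices.

    The 0.4 / 0.4 / 0.2 weights are arbitrary assumptions for illustration.
    """
    power = 1.0 if device.on_mains_power else device.battery_pct / 100
    return 0.4 * (1.0 - device.utilisation) + 0.4 * device.link_quality + 0.2 * power


def place(workload: Workload, devices: list[Device]) -> Optional[Device]:
    """Return the highest-scoring eligible device, or None if none qualifies."""
    candidates = [d for d in devices if eligible(d, workload)]
    return max(candidates, key=score, default=None)


# A hypothetical, heterogeneous fleet; every figure here is made up.
fleet = [
    Device("phone-carriage-3", 2_048, 35, battery_pct=22, on_mains_power=False,
           utilisation=0.1, link_quality=0.6),
    Device("laptop-carriage-7", 8_192, 45, battery_pct=80, on_mains_power=False,
           utilisation=0.2, link_quality=0.7),
    Device("parked-ev-42", 16_384, 100, battery_pct=90, on_mains_power=True,
           utilisation=0.0, link_quality=0.9),
]
job = Workload("image-inference", min_memory_mb=4_096, min_tops=30)
chosen = place(job, fleet)
print(chosen.name if chosen else "no suitable device")  # parked-ev-42
```

Separating hard constraints from soft preferences keeps the rules easy to audit: a device either can or cannot run the job, and only then does ranking come into play. A real system would also need to move work elsewhere when a device leaves the network, which this sketch ignores.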

Importantly, these are capabilities we should be building today, whether our current workloads sit on-prem or in the cloud. The principles of cloud-native design, applied thoughtfully, prepare us for this more distributed world.

The real shift in thinking is to stop obsessing over the physical location of our compute. Instead, we need to focus on hosting workloads efficiently and securely, whether they run in a vast facility in the Arctic, 550 kilometres above our heads in orbit, or in the pocket of someone in Brazil. This change in mentality will deliver the greatest dividends. It frees organisations from the constraints of fixed infrastructure decisions and allows innovation to follow the data and the users wherever they are.

Fog computing does not replace traditional data centres or the cloud. It adds a powerful new layer that makes the best use of everything available. In many cases it will reduce pressure on centralised facilities and lower overall energy consumption. Latency-sensitive applications, from real-time AI inference to industrial control systems, stand to benefit enormously. Privacy improves when sensitive data never leaves the local device.

Of course, challenges remain. Security across such a distributed environment demands new thinking. Connectivity cannot be guaranteed everywhere, so systems must handle intermittent links gracefully. Orchestration at planetary scale will require clever new tools. Data governance and sovereignty must also be considered, even when the data's presence on any one device is ethereal and fleeting. Yet the building blocks already exist in various forms, and the pace of development in this area is impressive.
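Handling intermittent links gracefully often starts with something modest: retrying a failed upload with exponential backoff and random jitter, so that thousands of devices regaining signal together (as a train leaves a tunnel, say) do not all hammer a coordinator in lockstep. The sketch below is a generic illustration of that well-known pattern, not part of any particular fog platform; `retry_with_backoff` and the flaky `send_result` uplink are hypothetical names, and the default delays are arbitrary.

```python
"""Retry with capped exponential backoff and full jitter (generic illustration)."""

import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def retry_with_backoff(
    operation: Callable[[], T],
    max_attempts: int = 5,
    base_delay_s: float = 0.5,
    max_delay_s: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `operation` until it succeeds or `max_attempts` calls have failed.

    After the first failure, wait a random time of up to `base_delay_s`; the
    upper bound doubles after each further failure, capped at `max_delay_s`.
    Randomising the whole wait ("full jitter") stops devices that lost their
    link together from all retrying at the same instant.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return operation()
        except ConnectionError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: let the caller keep the work for later
            upper = min(max_delay_s, base_delay_s * 2 ** attempt)
            sleep(random.uniform(0.0, upper))
    raise AssertionError("unreachable: the loop always returns or raises")


# Hypothetical flaky uplink that drops twice before succeeding.
calls = {"count": 0}


def send_result() -> str:
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("link dropped")
    return "uploaded"


# Pass a no-op sleep so the demo runs instantly.
print(retry_with_backoff(send_result, sleep=lambda _: None))  # uploaded
```

When the attempts run out the error is re-raised, leaving the caller free to hold the result on the device until a link returns.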

The organisations that start preparing today by adopting portable, secure and observable workloads will find themselves best placed for whatever comes next. The fog beyond the cloud is not a distant vision. It is already forming around us on every crowded train, in every parked electric car and across the skies above us. The question is no longer whether this future will arrive; it is already here. The real question is who will be ready to make the most of it.