The MCP Problem

Organisations today face strong pressure to adopt AI tools to stay competitive. The new refrain is ‘AI first’: the mantra that any company that does not fully embrace AI will be left behind by those that go all-in. Solutions like Claude from Anthropic stand out for their ability to process complex information, support better decision making, and drive operational efficiency for corporate customers. Many companies choose the Model Context Protocol, known as MCP, to connect these AI models to their internal data and systems. MCP uses a client-server architecture that enables rich, dynamic interactions between the AI and enterprise tools. Although this design works efficiently for rapid development and testing, it creates several serious challenges when introduced into secure production environments.

The fundamental architecture of MCP as it stands clashes with standard enterprise security practices. Servers placed inside the corporate network must allow inbound connections from a broad range of external IP addresses, because the addresses used by Anthropic are subject to frequent change. This forces network and security teams to make significant changes to firewalls, open pathways through demilitarised zones, and update reverse proxy configurations. Even organisations equipped with tools such as web application firewalls (WAFs) or Secure Access Service Edge (SASE) platforms must invest considerable time in testing and fine-tuning these adjustments. Any configuration error can create security gaps or disrupt existing business systems, leading to operational instability.
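To make the inbound allowlisting problem concrete, the following is a minimal sketch of the check a firewall or reverse proxy rule effectively performs. The address ranges are documentation-only placeholders (RFC 5737), not real Anthropic addresses, and the names `ALLOWED_SOURCE_RANGES`, `is_allowed_source`, and `exposed_address_count` are hypothetical, introduced purely for illustration.

```python
import ipaddress

# Hypothetical allowlist. These are RFC 5737 documentation ranges,
# NOT real vendor addresses; a real list would come from the vendor
# and would need updating whenever the vendor's addresses change.
ALLOWED_SOURCE_RANGES = [
    ipaddress.ip_network("192.0.2.0/24"),
    ipaddress.ip_network("198.51.100.0/24"),
]


def is_allowed_source(source_ip: str) -> bool:
    """Return True if the inbound source address falls in any allowed range."""
    addr = ipaddress.ip_address(source_ip)
    return any(addr in net for net in ALLOWED_SOURCE_RANGES)


def exposed_address_count() -> int:
    """Count how many distinct source addresses the allowlist admits."""
    return sum(net.num_addresses for net in ALLOWED_SOURCE_RANGES)


if __name__ == "__main__":
    print(is_allowed_source("192.0.2.10"))   # inside an allowed range
    print(is_allowed_source("203.0.113.5"))  # outside every allowed range
    print(exposed_address_count())
```

The sketch illustrates the trade-off: the broader the ranges needed to keep pace with changing source addresses, the more external addresses are permitted to reach the internal server.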

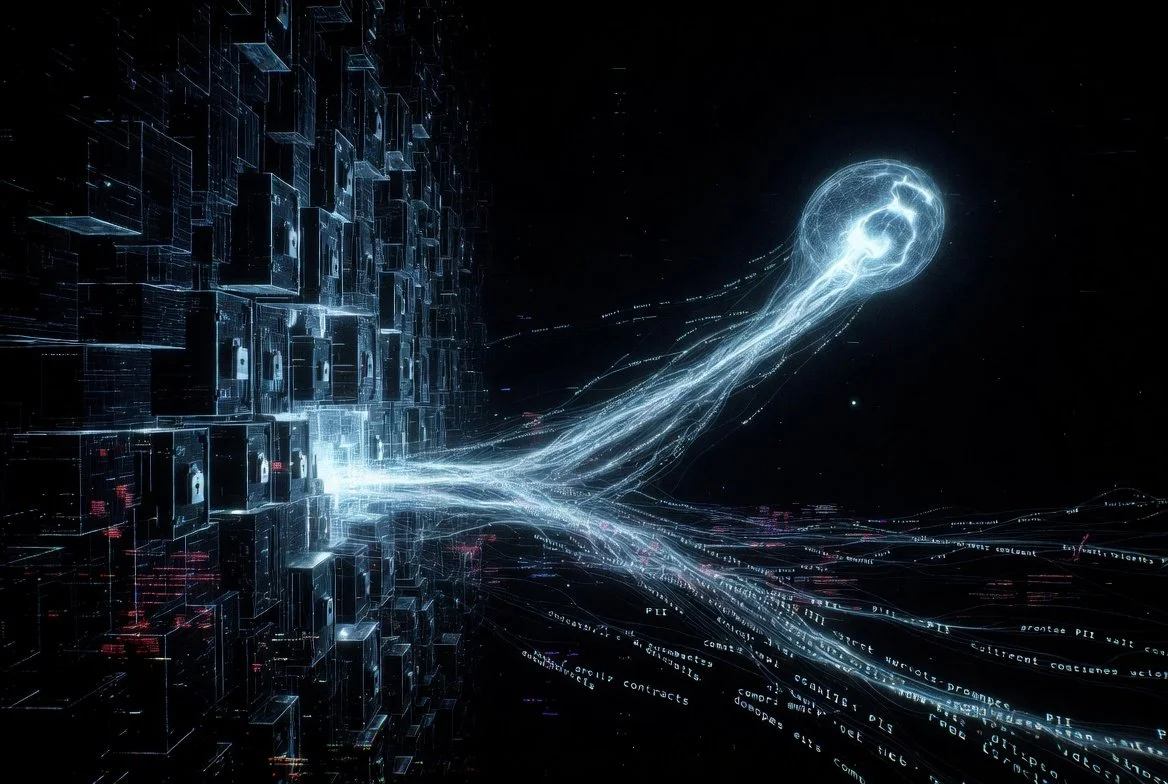

Outbound traffic poses a separate problem. When users interact with MCP through desktop applications, internal websites, or automated processes, their prompts, attached documents, and supporting context flow straight to Anthropic servers over encrypted TLS connections. Corporate monitoring, logging, and data loss prevention tools cannot easily inspect this traffic. This lack of visibility means security teams have no reliable way to know what sensitive information is leaving the organisation. The result is a significant blind spot in data protection strategies.

Anthropic does offer tenant isolation that keeps customer data separate from model training datasets. While helpful for long-term privacy, this measure does not address risks during live use. Employees or AI agents can include confidential files, customer records, or proprietary information in their prompts without any internal review or blocking mechanism. For companies in regulated sectors such as financial services, healthcare, and law, this exposure represents a major compliance vulnerability. Breaches of data protection rules can lead to heavy fines, damaged reputation, and loss of customer confidence.
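As an illustration of the kind of internal review step that is missing, here is a rough sketch of a check that could run on a prompt before it leaves the organisation. The patterns, the `SENSITIVE_PATTERNS` table, and the `review_prompt` function are all assumptions made for this example. Real data loss prevention tooling is considerably more sophisticated, and simple patterns like these will both miss sensitive data and flag harmless text.

```python
import re
from typing import List, Tuple

# Hypothetical, deliberately simple patterns for illustration only.
SENSITIVE_PATTERNS = {
    "email_address": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "card_like_number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
    "confidential_marker": re.compile(r"\bconfidential\b", re.IGNORECASE),
}


def find_sensitive(prompt: str) -> List[str]:
    """Return the sorted names of every pattern that matches the prompt."""
    return sorted(
        name for name, pattern in SENSITIVE_PATTERNS.items()
        if pattern.search(prompt)
    )


def review_prompt(prompt: str) -> Tuple[bool, List[str]]:
    """Return (allowed, findings); the prompt is allowed only if nothing matched."""
    findings = find_sensitive(prompt)
    return (not findings, findings)


if __name__ == "__main__":
    print(review_prompt("Summarise the Q3 roadmap"))
    print(review_prompt("Card 4111 1111 1111 1111 for jane@example.com"))
```

A check like this only helps if it sits on the path that prompts actually take, which is precisely the visibility that is hard to obtain when traffic goes directly from the user's application to an external service.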

Organisations facing these issues today can take some comfort in knowing that improvements are on the horizon. Anthropic is understood to be working towards more enterprise-friendly architectures for MCP in future releases. In addition, NVIDIA’s recent announcement of a trust layer for AI agents and integrations offers promising new options for governance, security guardrails, and verifiable controls around data flows. However, these advancements are not yet fully rolled out or battle-tested across all environments, leaving many companies to navigate the realities of today’s MCP implementations while planning ahead.

Smaller and mid-sized companies are particularly vulnerable. Unlike large corporations that maintain dedicated architecture and security design teams, many of them operate without this specialised support. They lack the internal expertise needed to properly evaluate MCP’s impact on their existing infrastructure. As a result, AI projects often face long delays while teams debate risks, design makeshift solutions, or seek external help. Poor documentation makes the situation worse. Knowledge about network changes, data flows, and security controls remains scattered across different departments and individuals. This fragmentation leads to repeated mistakes, slow troubleshooting, and difficulty proving compliance during audits.

As AI adoption moves from small pilots to wider deployment, these problems intensify. Each additional use case adds more inbound and outbound connections, increasing complexity and risk. Teams spend more time managing exceptions than delivering business value. Leadership faces growing uncertainty about whether the organisation can safely scale its use of powerful AI capabilities. Auditors and regulators increasingly ask tough questions about data flows that the company cannot fully answer. The absence of clear system understanding turns promising technology into a source of ongoing concern rather than a strategic advantage.

At the heart of the issue is a lack of control and visibility. Without proper insight into how data moves through MCP integrations and without reliable records of system architecture, organisations struggle to balance innovation with necessary security and governance. Many businesses find themselves caught between the desire to leverage AI and the practical difficulties of doing so responsibly.

Optica Technology Design helps companies address these exact challenges. We start by gaining a deep understanding of the tools, systems, and security measures already in place before delivering clear recommendations and support.

Our mission is to bring exceptional clarity and structure to technology environments that power modern organisations. We make complex systems understandable and manageable so engineering, operations, compliance, and leadership teams can move forward with confidence.